Current Status

LLM applications increasingly depend on context adaptation rather than weight adaptation (i.e., fine-tuning) to improve their performance.

Challenges

Context adaptation faces two main limitations:

Brevity Bias: Prompt optimization methods tend to collapse into short, generic prompts, and iterative methods produce near-identical prompts that propagate the same errors as the seed prompt.

Context Collapse: When asked to rewrite the entire context end to end, an LLM tends to compress it into much shorter summaries, causing information loss and a sharp drop in performance.

Proposed Solution

The authors argue that contexts should function not as concise summaries but as comprehensive, evolving playbooks: detailed, inclusive, and rich with domain insights (2).

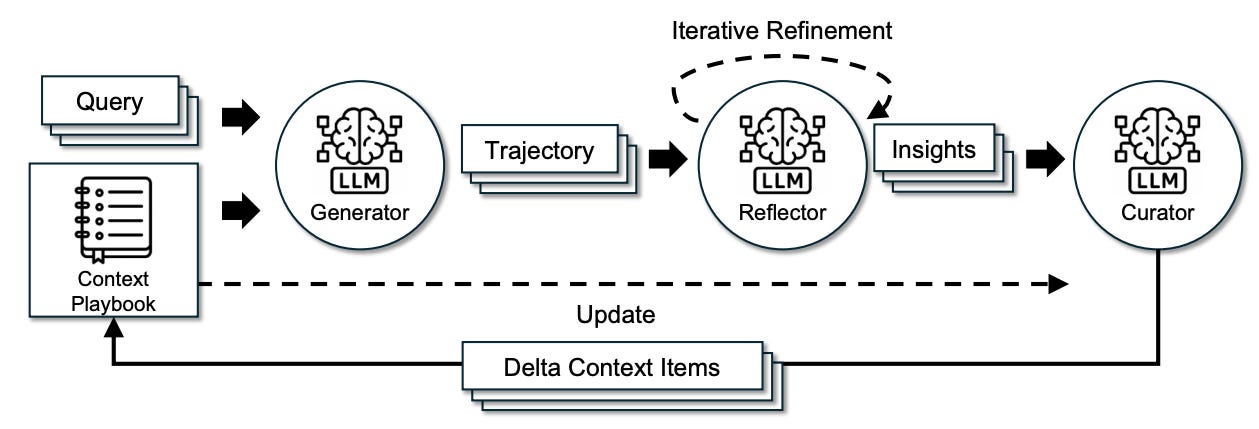

ACE (Agentic Context Engineering) is a framework for continuous context adaptation in which contexts are treated as evolving playbooks that accumulate and organize strategies over time.

The ACE workflow consists primarily of four steps:

The generator: Produces reasoning trajectories;

The reflector: Critiques traces, extracts insights, and optionally refines them across multiple iterations;

The curator: Integrates these insights into structured context updates;

Grow-and-refine: To keep the context from growing too long, a de-duplication step can be run periodically, pruning redundant bullets by comparing their semantic embeddings.
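The four steps above can be sketched as a single loop. This is a minimal, runnable illustration under loud assumptions: `generator`, `reflector`, and `curator` are hypothetical deterministic stubs standing in for LLM calls, the bag-of-words `embed` replaces a real semantic embedding model, and the 0.9 similarity threshold and the `refine_every` schedule are arbitrary choices, not values from the paper.

```python
import math
from collections import Counter


# Hypothetical stubs: in ACE each role is an LLM call. Deterministic
# functions are used here so that only the data flow is illustrated.
def generator(query: str, playbook: list[str]) -> str:
    """Produce a reasoning trajectory for `query`, conditioned on the playbook."""
    return f"answered {query!r} using {len(playbook)} bullets"


def reflector(trajectory: str, feedback: str) -> list[str]:
    """Critique a trajectory and extract insights.

    A real reflector would critique `trajectory` itself and could refine its
    insights over several rounds; this stub just turns feedback into a lesson.
    """
    return [f"lesson: {feedback}"]


def curator(insights: list[str]) -> list[str]:
    """Turn insights into delta bullets to append (never a full rewrite)."""
    return [f"- {insight}" for insight in insights]


def embed(text: str) -> Counter:
    """Toy bag-of-words vector (assumption: stands in for a semantic embedding)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def grow_and_refine(playbook: list[str], threshold: float = 0.9) -> list[str]:
    """De-duplicate: keep a bullet only if it is not too similar to an earlier kept one."""
    kept: list[str] = []
    for bullet in playbook:
        if all(cosine(embed(bullet), embed(k)) < threshold for k in kept):
            kept.append(bullet)
    return kept


def ace_loop(tasks: list[tuple[str, str]], refine_every: int = 2) -> list[str]:
    """Run generator -> reflector -> curator per task, pruning every `refine_every` steps."""
    playbook: list[str] = []
    for step, (query, feedback) in enumerate(tasks, start=1):
        trajectory = generator(query, playbook)
        insights = reflector(trajectory, feedback)
        playbook.extend(curator(insights))  # grow: append delta bullets
        if step % refine_every == 0:
            playbook = grow_and_refine(playbook)  # refine: prune near-duplicates
    return playbook


if __name__ == "__main__":
    tasks = [
        ("q1", "check pagination before summing"),
        ("q2", "check pagination before summing"),
        ("q3", "verify API auth token"),
    ]
    for bullet in ace_loop(tasks):
        print(bullet)
```

Because the curator in this sketch only appends bullets, nothing already in the playbook is rewritten or summarized away; a bullet disappears only through the explicit de-duplication pass.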

Results

Enables self-improving agents without ground-truth labels, as demonstrated by a 17.1% accuracy gain on the AppWorld benchmark;

Delivers non-trivial gains on domain-specific reasoning tasks, with an average improvement of 8.6%;

Reduces adaptation latency by 86.9% on average.

Thoughts

The agentic LLM-in-the-loop workflow involving a reflector and a curator is not a novel invention; the real innovation of this paper lies in its structural approach to storing and managing contexts. The proposed method builds on the authors’ previous work, Dynamic Cheatsheet [1], which stores LLM memory as a collection of bullet entries. By focusing only on local entries relevant to a query and making incremental updates, ACE avoids the pitfalls of brevity bias and context collapse. Simple but effective.
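The itemized-memory idea in the paragraph above can be sketched as follows. The `Bullet` and `Playbook` names, the word-overlap retrieval, and the helpful/harmful counters are illustrative assumptions, not code from ACE or Dynamic Cheatsheet; the point is that an update touches only the bullets it names and leaves every other entry untouched.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Bullet:
    bid: int
    content: str
    helpful: int = 0  # hypothetical counter: times the bullet was tagged helpful
    harmful: int = 0  # hypothetical counter: times it was tagged misleading


class Playbook:
    """Context stored as itemized bullets rather than one monolithic prompt."""

    def __init__(self) -> None:
        self.bullets: dict[int, Bullet] = {}
        self._ids = count(1)

    def add(self, content: str) -> int:
        bid = next(self._ids)
        self.bullets[bid] = Bullet(bid, content)
        return bid

    @staticmethod
    def _overlap(query_words: set[str], bullet: Bullet) -> int:
        return len(query_words & set(bullet.content.lower().split()))

    def relevant(self, query: str, k: int = 2) -> list[Bullet]:
        """Hypothetical retrieval: top-k bullets sharing at least one word with the query."""
        words = set(query.lower().split())
        ranked = sorted(self.bullets.values(), key=lambda b: (-self._overlap(words, b), b.bid))
        return [b for b in ranked[:k] if self._overlap(words, b) > 0]

    def apply_delta(self, add: list[str], tags: dict[int, str]) -> None:
        """Incremental update: bump counters on tagged bullets, then append new ones."""
        for bid, tag in tags.items():
            if tag == "helpful":
                self.bullets[bid].helpful += 1
            elif tag == "harmful":
                self.bullets[bid].harmful += 1
        for content in add:
            self.add(content)


if __name__ == "__main__":
    pb = Playbook()
    a = pb.add("paginate API results before summing totals")
    b = pb.add("use UTC timestamps when comparing dates")
    print([h.content for h in pb.relevant("sum the totals from the API", k=1)])
    pb.apply_delta(add=["retry on HTTP 429"], tags={a: "helpful"})
    print(pb.bullets[a], pb.bullets[b])
```

Compared with asking an LLM to rewrite the whole context, a delta like this cannot shorten or paraphrase an untagged bullet. Word overlap is only a placeholder here; an embedding-based retriever would slot into `relevant` without changing the update logic.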

Instead of treating an LLM as a gigantic magic box and hoping it can process everything we throw at it, we’re increasingly seeing the value of a more structured approach. The same idea appears in the shift from the traditional “generator → evaluator” paradigm to a more nuanced “generator → reflector → curator” workflow. Splitting reflection and curation into two dedicated roles seems to work better than asking a single LLM call to handle the composite task.

References

[0] Zhang et al. Agentic Context Engineering: Evolving Contexts for Self-Improving Language Models. https://www.arxiv.org/pdf/2510.04618.

[1] Suzgun et al. Dynamic Cheatsheet: Test-Time Learning with Adaptive Memory. https://arxiv.org/abs/2504.07952.